The Patent Programmer-Practitioner: Lift Hills and Drops on the AI Forevercoaster

This is a longish and long-overdue post with some of my thoughts (aka a lightly edited brain dump/screed) on developing and using agentic AI for patent practice.

How it Started

    commit 59014c091efa219987779af1c0844c4aa0304ee0
    Author: Noah K. Tilton <code@tilton.co>
    Date:   Sun Jan 7 17:00:08 2024 -0600

        blah

I made this initial commit on a Sunday afternoon with a listless message I use when I’m too lazy or tired to type a descriptive one. This is not usually a problem, because most of the software I write is not intended to be read or used by others. I have not worked as a professional software developer for over a decade, and as a patent prosecution partner with a robust practice, I still do not.

However, in late 2023, something changed, and looking back, it marked a momentous time in my journey as a patent practitioner. Over the holidays, a colleague repeatedly pestered me (in a good way) about ChatGPT, and specifically, whether it could be used in the practice of patent law.

In the nine months or so leading up to that time, during the initial GPT-3 era, I was like a lot of other people in the IP space – ambiently familiar with large language model (LLM) technology, but focused on other things. I had also developed a bit of trepidation toward LLMs, due to an uneasiness I had felt during early experimentation with GPT, that I was riding up a lift hill, and once I reached the crest and started the first precipitous drop, there would be no getting off. This is still the best metaphor I can conjure for the viral, exponential arrival of LLMs – as a rollercoaster ride that never ends.

I had some downtime over the holidays, and I usually code during that period since it’s cold, dark and I can’t sit still. Within a few weeks of being nagged by that persistent colleague, I had a working web app, later renamed OrdinarySkill.ai (OS), that performed a variety of automated LLM-based drafting and prosecution tasks. While I made OS available for use to others, I had no aspirations to be a software CEO. Any usefulness to others was purely incidental. Notwithstanding, over 1B tokens have been consumed by users of OS to date, for a variety of things.

In sum, OS was an early indicator of the disruptive potential that LLMs would have on the practice of patent law, and it was soon surpassed by more capable (read: more user-friendly) tooling. I continued working on it actively until Fall of 2025, and it dramatically accelerated aspects of my practice, which did not go unnoticed.

“Buy or Build?”

This is one of the questions that people began asking during this time. The romancing by any number of more or less credible SaaS providers has been intense. All offer some form of LLM-based patent workflow automation.

I developed an aversion to these spammy offers–which continue essentially unabated to this day–and to the COTS products behind them, some of which are provided by companies with very deep pockets, both well-established legal software players, and new entrants. The reasons for my gut-level response are numerous, and if you don’t care, skip this section. In no particular order:

- I have never needed COTS to access an LLM, because I am comfortable writing code to access private language model APIs directly. Nothing stands between me and the model. I know, down to the token, exactly what is being fed into the model, and can reason explicitly about outputs with zero opacity (and no upcharge). Need to generate something specific and bespoke? Wrap custom prompts in a loop or a function. Need to change something? Add new functionality? I have access to the same agentic coding tools the COTS vendors do, and can knock features out in an afternoon. Or an hour. Or less. (More on this in short order.) I’ve been accused of “vibe coding”, and I am guilty as charged. I’m in good company.

- Because the COTS patent tools are not programming environments (by definition and design), they are not flexible enough to accommodate all use cases. This level of control isn’t for everyone, but it’s unaddressed, and probably unaddressable by COTS software. Tooling created for all patent attorneys at all law firms attempts to be all things to everyone, and does a mediocre job at everything.

- LLM systems are already non-deterministic by default, which makes reasoning about their outputs a hard problem. The last thing I want is well-intentioned minders of a walled garden adding more layers of uncertainty to the process. I have heard repeatedly from other frustrated practitioners riding the LLM rollercoaster that they simply cannot get COTS software to do what they want it to do, regardless of how exacting or precise their prompts have become.

- Apart from reduced flexibility, there are legitimate questions around the value add of patent COTS over foundation models themselves, which remains dubious unless ease of use is of paramount importance (which it is in some cases). While most foundation models are effectively identical, harnesses are critical for getting useful results. And because harnesses are critical, they’re the last thing that should be outsourced.

- No one knows what current patent law AI tool providers, who have at least theoretical access to some of the juiciest data on the planet, will do with it in the future. A federal judge recently ruled that a criminal defendant’s conversations with Claude were not covered by attorney-client privilege. Information shared with publicly available LLMs appears to be a law enforcement treasure trove, on the order of networked automatic license plate readers or genetic ancestry databases in terms of its utility in building criminal cases. In that climate, introducing third party risk seems unwise, even if the parallel is a civil-equitable context.

- FOMO is not a reason. The Citrini/Shah paper linked below reads like alarmist bullshit, and some of it is, but the AI-Driven Economic Feedback Loop is 100% real. COTS adoption feels a lot more like following than leading.

- Last but not least, there’s the issue of VC’s overt disintermediation of a proud legal tradition with roots reaching back to the founding of the country. Not all AI tools are VC backed, but I think it’s shortsighted to assist in this process by being a passive consumer of COTS patent AI software. I’m not really interested in helping to train my replacement.

It felt very good to get all of that off my chest, and I hope you do not feel personally attacked, dear reader. I think it’s important, since I’m discussing my vocation, to remind you that this blog represents my opinion only, and I do not speak for anyone else (see the disclaimer, below). However, I think this is important context for where we’re headed.

Assuming patent COTS tools are an antipattern for the Patent Programmer-Practitioner, what does that leave one to do?

The Real MacKay

This is really a blog post about how LLM technology is truly indistinguishable from magic, and how embracing it over the past few years has given me a Christmas morning-like excitement that I haven’t felt about a technology since I started teaching myself Red Hat Linux in 2003. Before that I’d probably have to revisit the first few months I spent logging on to AOL in the early ’90s to find similar enthusiasm for a technology. As far as digital technologies go, LLMs are the Real McCoy.

And a lot has been written recently about the disruption posed by “AI.” I use the term advisedly, as one should–as Rusty Foster correctly points out, AI isn’t people, and anthropomorphizing generative AI in the form of LLMs can be a costly category error, because it blurs the line between a piece of software and a human being. Yet some of the more hyped up prose implies that this is a distinction without a difference, because LLM output “quality has reached a point where many professionals can’t distinguish [it] from human work.” But “many professionals” isn’t the same as “all.” It’s certainly not “all patent examiners.”

If you are looking for semantically ablated text, you’ll find mounds of it. The syntax hallmarks of AI slop are unmistakable. You might not care if you are booking a plane ticket. But if you are drafting a patent claim, you should.

So while they are the Real McCoy, at this point, it’s probably also correct, especially if you are using them in the practice of law, to think of LLMs as stochastic parrots and blurry JPEGs of reality, and act accordingly. There are much more colorful descriptors, if you wish.

Despite their rough edges, LLMs are the best learning platform humanity has ever assembled, apart from other humans, and are lowering the innovation bar across the board, in every domain you can think of, including mathematics.

In my case, it’s motorcycles. At least this quarter. I decided to start work on an antique rehabilitation project, and have recently used LLMs to teach myself about bonded titles (joke’s on me), 4-cylinder carburetor rebuilding, and 3D printing. These are topics about which I knew next to nothing, until 45 days ago.

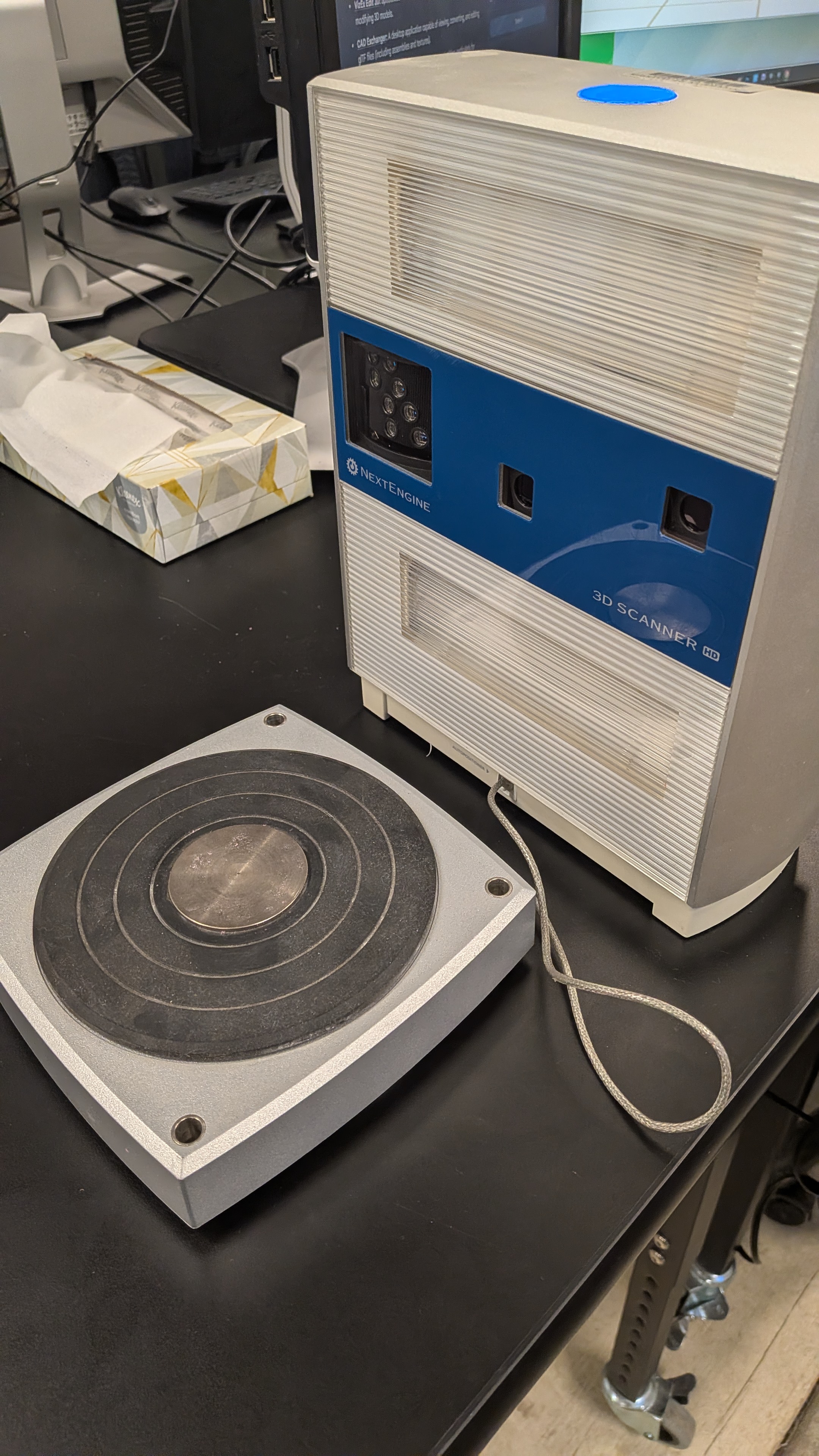

My cover story is that I can use the bike to teach my kids about engines. In connection with this we recently visited the UIUC Fab Lab (shoutout to Michael and co.) intending to use their 3D scanner for a part that would be $50 on eBay. It turns out that the 3D scanner device manufacturer had gone poof, along with the software for it.

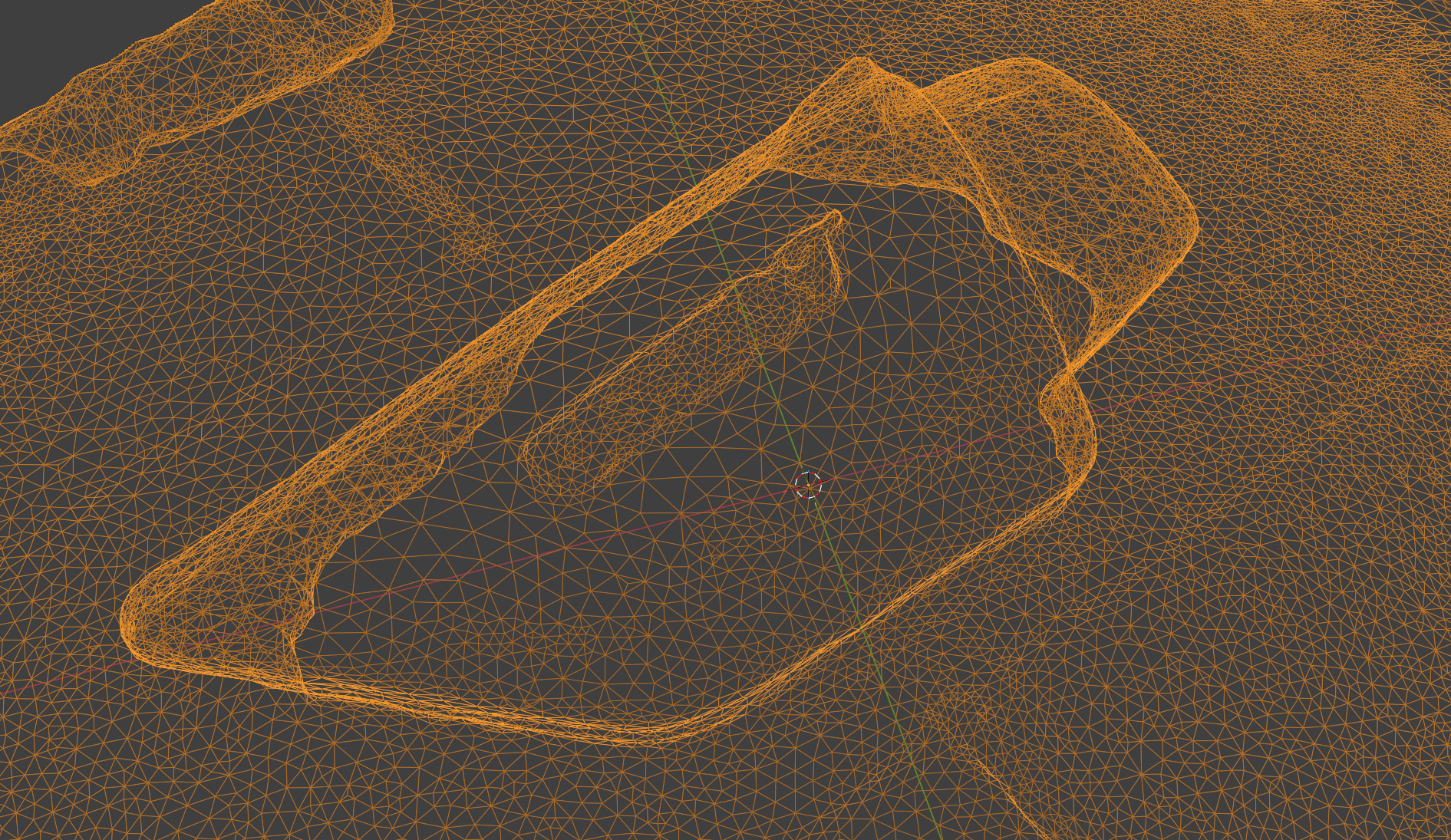

Undeterred, we fired up Polycam–an amazing piece of software in its own right–and were able to quickly capture a 3D model of the complex part we wanted to print, in glTF 2.0, using our phones. We then loaded that file into Blender (this decision was made for us, because glTF is Polycam’s only free export format, and Blender supports glTF well) and are now well on our way to learning enough Blender to get useful 3D prints, with almost no trial and error or RTFM.

It’s worth pausing to appreciate that this entire sequence of events is something I would never have had time for in a non-LLM world. And there are 1000 other examples of this type of self-directed learning, many of which I have taken for granted and already forgotten.

It’s also worth pausing to consider: what if Polycam wasn’t a thing? What if there wasn’t a quick and easy alternative to the defunct 3D scanner? Could we have bypassed the NextEngine licensing server by reflashing the device? Written replacement firmware? What would the solution path look like? Could we point Claude Opus at it and go make a sandwich? Perhaps not entirely, but how much time would that have saved, compared to cracking it manually?

If there is a problem that needs solving, or an idea about which one has an inkling, or a project that seems a tad too ambitious, some initiative plus an LLM makes for a significantly more capable/dangerous human. I am not the only one whose initial AI skepticism has been overcome by recent advances in agentic AI discourse, especially where software is concerned.

Another recent example, beyond motorcycles, is how quickly things I already know how to do can be significantly sped up. For some of my clients, I need to keep up with new small arms technologies and trends in that industry. So I created GunClaims, a microsite that processes the entire USPTO patent gazette every Tuesday, classifies the new patent issuances according to whether they are in the small arms/ammunition field, and publishes them in an easy-to-read format.

It took me about 8 hours total to put GunClaims together, from programming through testing and deployment. That’s pretty amazing velocity from idea to clockwork execution. Of course, GunClaims could be done with any sub-specialty or subject matter interest, and it is far from perfect. I mention it only as one example of the amplification potential in using LLMs to automate tasks that might otherwise exist only as mere passing thoughts.
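The post does not say how GunClaims decides which issuances belong to the small arms/ammunition field. As a minimal sketch only, one cheap first pass (an assumption, not the site's actual method) would be to filter on CPC symbols, since classes F41 (weapons) and F42 (ammunition; blasting) cover much of the field, and leave borderline cases to an LLM. The `Patent` record and the function names below are hypothetical.

```python
from dataclasses import dataclass

# Assumption: CPC classes F41 (weapons) and F42 (ammunition; blasting)
# serve as a cheap first-pass filter. The real site may work differently.
SMALL_ARMS_CPC_PREFIXES = ("F41", "F42")


@dataclass(frozen=True)
class Patent:
    """Hypothetical record for one newly issued patent."""
    number: str
    title: str
    cpc_symbols: tuple[str, ...]


def is_small_arms(patent: Patent) -> bool:
    """Return True if any CPC symbol falls under a watched class."""
    return any(
        symbol.upper().startswith(SMALL_ARMS_CPC_PREFIXES)
        for symbol in patent.cpc_symbols
    )


def weekly_digest(issued: list[Patent]) -> list[str]:
    """Format the matching patents as one line each, sorted by number."""
    hits = sorted((p for p in issued if is_small_arms(p)), key=lambda p: p.number)
    return [f"{p.number}: {p.title}" for p in hits]
```

Swapping the prefix tuple for another set of CPC classes would retarget the same sketch at a different sub-specialty.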

Agentic Schmagentic (A very complicated and nuanced story)

In this moment, we are well on our way into the agentic era of the LLM rollout. In simple terms, an “agentic” model refers to one that has state (memory) and access to tools, and that runs in a loop to act autonomously in furtherance of a goal. That goal–which can be expressed in multi-modal terms–could be something like “create firmware for this defunct 3D scanner.” Or “explain where this pilot jet goes in relation to the float arm.” Or “draft an office action response” or “draft a first draft of a patent application.” However, because $goal can be just about anything, there has been much rending of garments and outright doomerism over agentic technology (some of which may be correct).
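The three ingredients of that definition (state, tools, and a loop) can be sketched in a few lines. This is a toy illustration under stated assumptions: `run_agent`, `fake_model`, and the two tools are hypothetical names, and the "model" is a stub so the example runs without any API. A real agent would replace `fake_model` with a call to an LLM.

```python
from typing import Callable

# Hypothetical tools the agent may call: name -> function taking one string.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}


def fake_model(goal: str, memory: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: picks the next action from the goal and memory.

    Returns (action, argument); the action "finish" ends the loop.
    """
    if not memory:
        return "word_count", goal
    return "finish", f"goal has {memory[-1]} words"


def run_agent(goal: str, model=fake_model, max_steps: int = 5) -> str:
    memory: list[str] = []              # state carried across iterations
    for _ in range(max_steps):          # the loop, with a hard step budget
        action, argument = model(goal, memory)
        if action == "finish":
            return argument
        result = TOOLS[action](argument)  # tool use
        memory.append(result)             # the observation feeds the next step
    return "step budget exhausted"
```

The step budget is a design choice worth keeping in any real version: because $goal can be just about anything, an unbounded loop is the first thing to rule out.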

The economic consequences of the transformation to an agentic world are less clear.

Despite this, not everyone believes the sky is falling. Labor substitution is still difficult, and bottlenecks still exist (often by design). A more sober report from Citadel in response to the Citrini piece makes the case that AI is a productivity shock, not a demand shock, and notes that SWE jobs are rapidly rising while human demand for new technologies remains elastic. Others have observed that software developers are basically cockroaches.

I think Terry Tao gets it right, discussing the application of Generative AI to the Erdős Problems:

There’s a big crowd of people who really, really want AI success stories. And then there’s an equal and opposite crowd of people who want to dismiss all AI progress. And what we have is a very complicated and nuanced story in between.

Regardless of which prognostications are correct, the truth of the matter is that the agentic LLMs are already extremely impressive, in certain narrow bands. Thus, there does not seem to be any question that LLM tech will be disruptive of existing white collar labor practices. The question is one of degree. But the first and most notable disruption to date has been to code generation, a.k.a. computer programming, a.k.a. software engineering.

Jetpacks, Baby!

Returning to my Git commit above, for the first year or so of developing OS, I was writing code as I had for 20 years – in a text editor, with code assistant niceties (syntax highlighting, static analysis, autocomplete and an LSP server). A year later, I had worked up the nerve to use ChatGPT to explore coding ideas piecemeal, evaluating the outputs and manually copy-pasting them into my editor, before cleaning them up and testing them. At this point, I was still facing down the same trepidation and unease I had felt when first touching these models, something that I was not alone in feeling. That rollercoaster looks massively tall…

Mid-October of last year marked the agentic inflection point in my use of LLMs. After hearing a friend rave about Cursor, I finally broke down and installed Visual Studio so I could dip my toes into Claude and Codex. Whoosh! This rollercoaster has a jetpack! I was hooked.

…And that was an older version of Codex (which I settled on using, due to inertia and familiarity). Newer versions of Claude/Codex are even more impressive, and are good at making (sometimes improved) copies of other highly complex things, like JavaScript frameworks and C compilers. They can also do this very swiftly, compared to traditional software development practices.

Chris Lattner’s analysis of the Anthropic C compiler project is quite revealing of current agentic capabilities. The technical capability conclusions of that article are worth internalizing. I discuss them in more detail below. More directly relevant to the practice of patent law, Chris noted:

IP Law and Proprietary Software Moats

The Claude C Compiler also raises important yet uncomfortable questions about intellectual property. If AI systems trained on decades of publicly available code can reproduce familiar structures, patterns, and even specific implementations, where exactly is the boundary between learning and copying?

This is an excellent observation, and an illustration of an issue (LLM-based copying) that has been on IP professionals’ radars for a while now. Similar concerns touched off (another) SaaS-pocalypse in markets over the last few weeks. Suffice it to say, IP law is rapidly evolving in this area, and markets are–rightfully–jittery, as agentic capabilities have arrived.

Of Vibe Drafters and Agentic Patent Practitioners, or How I Am Using Agentic AI in Patent Practice

So how am I using agentic AI in patent practice?

The shortest answer is the following analogy:

coding agents : software engineering :: drafting agents : patent practice

The slightly longer answer involves a two-part experiment:

- Find a software practitioner blog post about how they are using agentic AI for software development.

- Apply the following word replacements:

  | from | to |
  | --- | --- |
  | code | draft |
  | software | patent |
  | programmer, engineer, etc. | patent practitioner |

The result of this word replacement experiment is likely as good an answer to the question as any other.
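The substitution step is mechanical enough to script. Here is a minimal sketch, assuming whole-word, case-insensitive matching with an optional plural "s". The table mirrors the replacements above plus two illustrative additions ("software engineer" and "coding"), and `patentize` is a hypothetical name rather than part of any tooling described in this post.

```python
import re

# Replacement table from the experiment, plus two illustrative additions.
REPLACEMENTS = {
    "software engineer": "patent practitioner",
    "programmer": "patent practitioner",
    "engineer": "patent practitioner",
    "software": "patent",
    "coding": "drafting",
    "code": "draft",
}

# Longest keys first so multi-word phrases win over their substrings;
# the second group carries an optional plural "s" through unchanged.
_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(k) for k in sorted(REPLACEMENTS, key=len, reverse=True))
    + r")(s?)\b",
    re.IGNORECASE,
)


def patentize(text: str) -> str:
    """Swap software-practice terms for patent-practice terms."""
    def swap(match: re.Match) -> str:
        original, plural = match.group(1), match.group(2)
        replacement = REPLACEMENTS[original.lower()]
        if original[0].isupper():
            # Preserve a leading capital, e.g. "Coding" -> "Drafting".
            replacement = replacement[0].upper() + replacement[1:]
        return replacement + plural
    return _PATTERN.sub(swap, text)


print(patentize("A programmer tests the code with a coding agent."))
# prints: A patent practitioner tests the draft with a drafting agent.
```

Other inflections (for example "Engineering") are deliberately left alone by the word-boundary match; each would need its own row in the table.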

Take for example the introductory paragraphs of Simon Willison’s recent post on Agentic Engineering Patterns (strikethrough and underline showing above substitutions):

Writing about Agentic ~~Engineering~~ <u>Patent Practice</u> Patterns

I’ve started a new project to collect and document Agentic ~~Engineering~~ <u>Patent Practice</u> Patterns—~~coding~~ <u>drafting</u> practices and patterns to help get the best results out of this new era of ~~coding~~ <u>drafting</u> agent development we find ourselves entering.

I’m using Agentic ~~Engineering~~ <u>Patent Practice</u> to refer to building ~~software~~ <u>patents</u> using ~~coding~~ <u>drafting</u> agents—tools like Claude Code and OpenAI Codex, where the defining feature is that they can both generate and execute ~~code~~ <u>a draft</u>—allowing them to test that ~~code~~ <u>draft</u> and iterate on it independently of turn-by-turn guidance from their human supervisor.

I think of vibe ~~coding~~ <u>drafting</u> using its original definition of ~~coding~~ <u>drafting</u> where you pay no attention to the ~~code~~ <u>draft</u> at all, which today is often associated with ~~non-programmers~~ <u>non-practitioners</u> using LLMs to write ~~code~~ <u>a draft</u>.

Agentic ~~Engineering~~ <u>Patent Practice</u> represents the other end of the scale: professional ~~software engineers~~ <u>patent practitioners</u> using ~~coding~~ <u>drafting</u> agents to improve and accelerate their work by amplifying their existing expertise.

With sincere apologies to Simon, the rhetorical point here is pretty clear: that this fun little experiment works so well demonstrates the near-total convergence between the cognitive tasks of professional software engineers and patent practitioners, as far as LLM tooling is concerned. The only differences are the technological domain and the credentials.

One caveat/clarification: there are important distinctions among the model instances that providers make available under different tenancy configurations and paid membership tiers. Those distinctions are beyond the scope of this post, except to say that vetting and diligence are essential to avoid privilege waiver or other issues. I am not endorsing any particular model provider.

I’ll be writing more about my free/open source agentic patent software stack in the coming days, so please stay tuned for that. For now it suffices to say, you know you’re on the bleeding edge when you are impatiently patching code to fix bug reports that are only a few hours old.

Merging, but Not Emerging (?)

Returning to Chris Lattner’s piece about the Claude C compiler, I suggest reading it again, but this time imagining that instead of a C compiler, he’s talking about a patent application drafting system (or whichever hairy knowledge artifact you please). Here are some of the things you might have read in that case, from his main takeaways (edited using my replacement table above, and graded by me for anecdotal accuracy):

- AI has moved beyond writing small snippets of drafts and is beginning to participate in drafting large patent applications (True)

- AI is crossing from local draft generation into global practitioner participation (True)

- Our legal apparatus frequently lags behind technology progress, and AI is pushing legal boundaries. Is private patent practice cooked? (Maybe)

- Good drafting depends on judgment, communication, and clear abstraction. AI has amplified this. (True)

- AI drafting is automation of implementation, so design and stewardship become more important. (True)

- Manual rewrites and translation work are becoming AI-native tasks, automating a large category of drafting effort. (True)

- AI, used right, should produce better drafts, provided humans actually spend more energy on architecture, design, and innovation. (True)

- Architecture documentation has become infrastructure as AI systems amplify well-structured knowledge while punishing undocumented systems. (True)

Perhaps the most interesting takeaway of Chris’s post (unedited) is that:

AI systems can internalize the textbook knowledge of a field and apply it coherently at scale. AI can now reliably operate within established engineering practice. This is a genuine milestone that removes much of the drudgery of repetition and allows engineers to start closer to the state of the art. But it also highlights an important limitation of this work:

Implementing known abstractions is not the same as inventing new ones. I see nothing novel in this implementation.

Change “engineering” to “lawyering” or “accountancy” and see how it feels. I think it’s about right, including that agentic systems are–currently–pattern-limited, and lacking truly creative emergent intelligence.

Chris then hits the nail directly on the head:

As implementation becomes cheaper, the role of engineers shifts upward. The scarce skills become choosing the right abstractions, defining meaningful problems, and designing systems that humans and AI can evolve together. This will increasingly blur the boundary between software engineering and product thinking.

The merger will not be televised.

It’s All One Song

The merger between software development and patent practice (and other knowledge tasks) shows itself in funny ways.

Example: I was recently offered a guest column by a major financial publication, and I spent a couple of days thinking about the topic and writing about my perspective. The copy was like this, but shorter and a lot more sterile. It used the phrase “jagged edge” (with attribution).

The editor refused to publish it, saying my essay was “duplicative of recently published content.” At first I thought I was being accused of plagiarism, but then I decided to ask the editor for clarification. What they really wanted, they said, was something more focused on “my specific experience in developing an AI solution, along with lessons learned/challenges observed that other AI innovators can appreciate.”

Well, this is that specific experience, in rando blog form, and the lesson learned/challenge observed is that we’re all on the same AI forevercoaster, and that wasn’t my original idea, either. On the off chance you’re that editor, I hope you don’t find this content overly duplicative, but also, who needs an editor (!?) and, you’re welcome (not getting those non-billable hours back).

Agentic Patent Practice: Inferences from Other Disciplines

Having established that agentic patent practice is a horse of a different color, but still a horse, there are endless lessons that we Agentic Patent Practitioners can take away from the “first wavers”–those whose disciplines have been so profoundly affected by the rise of agentic machines–and their useful adaptations. The aforementioned agentic engineering guide discusses several additional principles and patterns that are directly applicable to Agentic Patent Practice, and is a good place to start.

“Knowledge Priming” is another useful pattern that involves systematically parameterizing the agent with the information it needs to generate better outputs.
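One way to picture knowledge priming is as a function that assembles a fixed, labeled context block ahead of the task. The sketch below is an illustration only: the section labels, the `build_primed_prompt` helper, and the character budget are all hypothetical, and no particular model API is assumed.

```python
def build_primed_prompt(task: str, knowledge: dict[str, str], budget: int = 4000) -> str:
    """Prepend labeled reference material to a task, within a size budget.

    `knowledge` maps a section label (e.g. "Style guide") to its text.
    Sections are considered in the order given; any section that would push
    the total past the budget (counted in characters, a crude stand-in for
    tokens) is skipped.
    """
    parts: list[str] = []
    used = 0
    for label, text in knowledge.items():
        section = f"## {label}\n{text.strip()}\n"
        if used + len(section) > budget:
            continue  # does not fit; a later, smaller section still might
        parts.append(section)
        used += len(section)
    # The task always goes last, after whatever reference material fit.
    parts.append(f"## Task\n{task.strip()}\n")
    return "\n".join(parts)
```

Keeping the priming step in a plain function like this makes it easy to inspect exactly what the agent is being fed before any model sees it.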

Several others have written more or less formally about how they are using agentic AI systems more systematically to improve results.

Terry Tao is instructive here, too. He observed that current models are successful at eliminating tedium and searching the long tail of problem spaces, and at enabling population-level analysis, while expressing healthy skepticism of turnkey agentic workflows:

[A] lot of AI companies have this obsession with push-of-a-button, completely autonomous workflows where you give your task to the AI, and then you just go have a coffee, and you come back and the problem is solved. That’s actually not ideal. With difficult problems, you really want a conversation between humans and AI. And the AI companies are not really facilitating that.

This rings true.

My overarching point is that patent practitioners should be reading about the experiences of those who have gone before, absorbing their lessons, and porting their adaptations to our practice.

Riding the AI Forevercoaster off into the Sunset (?)

Lately I’ve been thinking about how the ranking of app tokens in my MFA app reflects the order of importance of each app to my life. There are a few token providers above my LLM providers on my phone, but the LLMs are close to the top–ahead of Amazon, Cloudflare, Firefox, GitHub, and social media, to name a few.

If your employer is not already forcing you to use AI, they probably will be soon. Indeed, if you are working a white collar job, and not retiring soon, facility with AI is not something you’ll be able to avoid.

It’s also true that AI makes everyone work more, and harder. As Siddhant Khare puts it,

AI reduces the cost of production but increases the cost of coordination, review, and decision-making. And those costs fall entirely on the human.

Recent research suggests essentially the same thing: that unfettered AI adoption can lead to “cognitive fatigue, burnout and weakened decision-making.” Khare makes some other good points about the inherent cognitive fatigue brought about by working with nondeterministic systems, the FOMO treadmill and prompt yak shaving. His solutions to these problems, and more, are worth reading. As are Steve Yegge’s recent thoughts on what he calls the AI Vampire. And those of Jennifer Moore on anchoring and vigilance.

These articles and others about cognitive debt make the point that the adoption of AI has forced many software developers who were formerly “doers” into “reviewers.” The same is true in the law. Gone are the days of keyboard jockeying, searching a thesaurus for the perfect next word while grinding out paragraphs of prose. Editing and revision are the main modalities now, even for newer practitioners. If you have used AI for even a short time, this is old hat.

For people just entering the practice, Terry Tao is a typical font of useful wisdom:

[Math] problems are like distant locations that you would hike to. And in the past, you would have to go on a journey. You can lay down trail markers that other people could follow, and you could make maps. AI tools are like taking a helicopter to drop you off at the site. You miss all the benefits of the journey itself. You just get right to the destination, which actually was only just a part of the value of solving these problems.

This is apt, and I would encourage newer practitioners to be wary of helicopter rides and other forms of convenience, lest they fall into the Sorcerer’s Apprentice Trap.

Better to stay firmly belted in to your coaster seat, keeping your arms and legs inside the ride at all times.